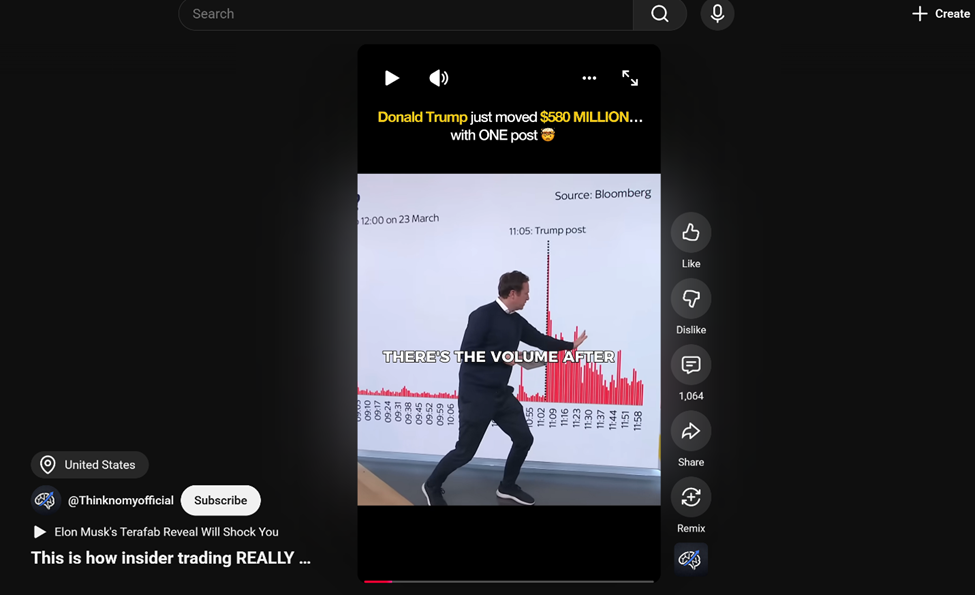

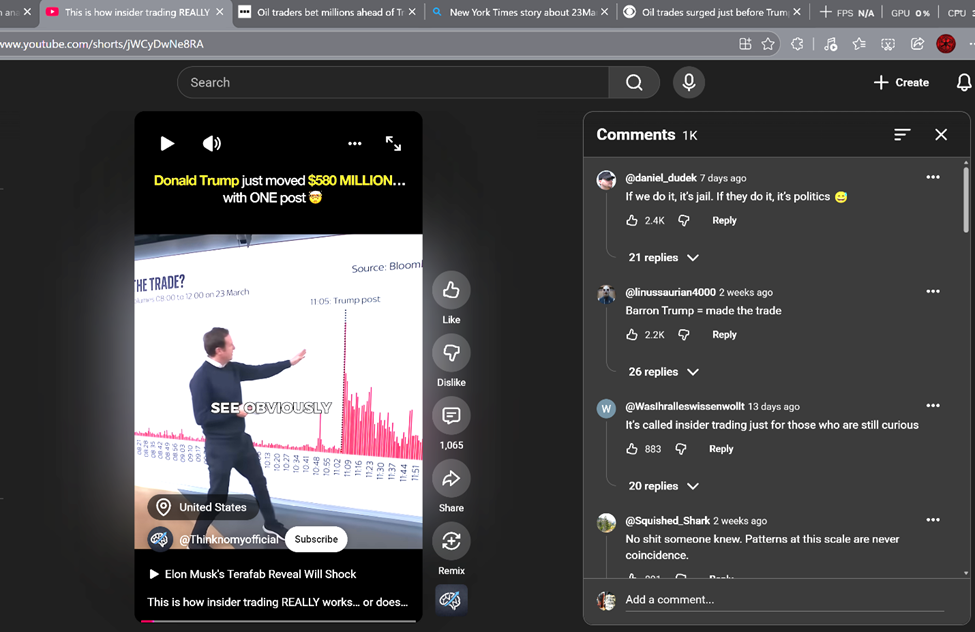

This is how insider trading REALLY works… or does it? 👀 #shorts #trading #donaldtrump

At first glance, it’s a quick, punchy, and honestly pretty convincing video. The speaker’s presence, the graphics, and the whole tone all work together exactly the way this kind of content is designed to spread. But that’s also what made me stop and take a second look. It’s not just the lack of context or sources, or how it feels more geared toward getting a reaction than actually informing me, the viewer. It’s the whole package: the layout, the title, and that catchy but mysterious background tune pulling you in. That’s why I decided to try to break this one down using a lateral reading approach.

Turns out, it’s a lot harder in practice than John from Crash Course Navigating Digital Information makes it look, especially with these dang short-form videos. My two little brain cells almost caught fire…

Step 1: Stop – What is the claim, and what feels off?

The video claims that Donald Trump “just moved $580 million… with one post.” In other words, it suggests that Trump knew this would happen and planned it.

The red flags I noticed immediately:

- No sources cited in the video other than a graph labeled “Source: Bloomberg”

- A strong, confident tone and a dramatic, mysterious soundtrack, with no additional sourcing or specific evidence

- A very short video, no longer than 34 seconds, focused purely on Donald Trump, the trade, and the mystery of it all…

- It feels like it’s simplifying something far more complex than a suspicious trade placed just before the President posted about a delay in bombing Iranian energy infrastructure.

This is exactly the kind of short, attention-grabbing video discussed in the module: fast, emotionally charged, and incredibly easy to share without really thinking it through.

Step 2: Investigate the Source

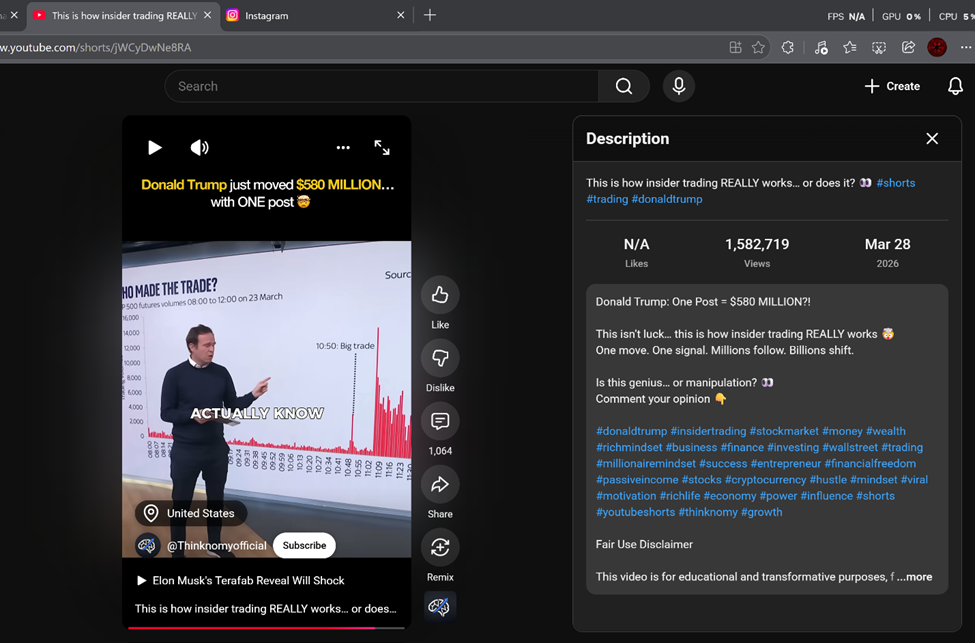

Next, I researched the YouTube channel itself.

Here are a few basic questions I wanted to answer:

- Who runs this account?

- Do they cite sources in other videos?

- Are they informational, opinion-based, or entertainment?

Here’s what I found:

- Based on its YouTube and Instagram descriptions, Thinknomy presents itself as a channel focused on wealth, entrepreneurship, discipline, and a success-driven mindset, using storytelling built from short clips of movies, news, and other media. The channel prides itself on sharing insights from the “thinkers” of the day to inspire growth and achievement at the individual level, and its messaging focuses on turning ideas into action through consistency and intentional effort. It has approximately 4.66K subscribers and 47 videos on YouTube, with 4.3 million total views, and has been active on YouTube since December 26, 2025. Yet the video in question, posted on March 28, 2026, has gained significant traction with 1.6 million views and 1,065 comments. Thinknomy also maintains an Instagram presence; however, there is no clear information on who operates, funds, or determines its content, which raises questions about transparency and credibility.

- There are no clear credentials or sourcing tied to the claim, or to the channel, for that matter.

That doesn’t automatically make it false, but it does mean I shouldn’t trust it at face value.

Step 3: Lateral Reading – What do other sources say?

Instead of staying on YouTube, I opened some new tabs and searched for answers to these questions:

Did Trump participate in or provide information that led to this big trade before he posted on Truth Social? Are we looking at possible insider trading, or is this a coincidence?

Reputable News Source #1

I checked coverage from BBC News

👉 Oil traders bet millions ahead of Trump’s Iran talks post

Findings:

- Here’s the kicker: to read this story from the BBC, you hit a paywall. In fact, many of the “reputable news outlets” require some form of subscription or an email login to access their content. WSJ, The New York Times, BBC, Reuters, and many others all use this model. What really got me was that even to verify the graph in the video, I would need to pay for access to the Bloomberg website.

- Because of the paywall, this source gave me nothing to work with.

You’ve got to pay to read their “truth” — and subscribe just to question it.

Reputable News Source #2

I also looked at CBS News online

👉 Oil trades surged just before Trump’s post on Iran talks. Some experts are suspicious. – CBS News

Findings:

- The CBS News article reports a real spike in oil trading right before Trump announced the pause in bombing Iran. The article notes that financial experts found the timing of Trump’s Truth Social post and the sudden spike in trading “suspicious.” However, it stops short of offering proof of insider trading, and it offers possible explanations, including coincidence and algorithmic trading. By comparison, the YouTube short compresses this event into a 34-second video that may imply wrongdoing. While the CBS article confirms that this kind of large trade is unusual and that its timing is strange, it adds context the video never even attempts to engage with. Overall, the reporting does not support the video’s tone or its implied conclusion, and it highlights how missing context can turn a questionable event into a misleading narrative or, worse, take a truth and distort it.

- It does, however, confirm that a significant and unusual trade involving securities and crude oil occurred within minutes of Trump’s post on March 23, 2026. In that sense it does not contradict the video, but the article doesn’t imply that Trump is the mastermind behind the trades, as the video’s title suggests.

This source indicates the issue is likely more complex than the video lets on.

Step 4: Fact-Checking Source

👉 Snopes

👉 RumorGuard from the News Literacy Project

Findings:

- I couldn’t find anything about this claim on either site.

- However, when I looked at the news outlets covering the $580M oil trades before Donald Trump’s post (the ones I could access without paying or giving up my email), they reported mostly the same facts and details, but the ole spin on Trump varied. Outlets like CBS News and Fortune kept things cautious, using words like “suspicious” without going hard in the paint. Meanwhile, others like The Daily Beast and Media Matters for America climbed over each other to do more than just hint at insider trading, and the more extreme outlets accused Trump outright.

is this true? Donald Trump just move $580 million… with one post – Google Search

“Did Donald Trump really move $580 million with one post?” That’s what I tossed into the good ole trusty Google search machine, and the algorithm bequeathed me the following…

After digging through a handful of “credible” sources, I found Google’s results were kinda all over the place: most of the news sources leaned liberal, a couple hovered near the center, and one in the top ten could be considered conservative. Still, they all told the same story, with slightly different spins depending on their audience. Same facts, different flavor. Even AI tends to lean a little left of center, so yeah… I’m not exactly taking anything at face value here.

Step 5: Check Context

This part matters more than most people realize.

I looked at:

- Date – Is this old information being reused?

- Location – Is this specific to one place?

- Missing details – What’s not being said?

In this case:

- It’s not an old post, and the event it covers is likely still developing. It focuses on the suspicious timing of Donald Trump’s post relative to when the trades were executed. What stood out to me is what’s missing from the video: there’s no proof of who made the trades, no confirmation of insider knowledge, and no expert sources. It leans heavily on suspicious timing without real evidence. Overall, it grabs attention but feels incomplete and leaves out key details needed to fully understand the situation.

This is where a lot of misinformation lives—not in completely false claims, but in missing context.

My Humble Assessment

After walking through all of this, my conclusion is that the claim in the video is:

👉 Misleading but partially true.

Here’s why:

- It lacks detailed sourcing and context

- It oversimplifies a more complex issue and timeline

- Other credible sources don’t specifically contradict it, but they do offer important context

- There is no specific evidence to support the implication that Trump knew about or planned the trades

Why This Matters

This whole process took me about two and a half hours (way too many rabbit holes… I almost fell into one and never got out… lol), and honestly, it all but confirmed something I had already suspected: that most short videos are built to hit your emotions, not actually inform you.

Trying to use lateral reading here was a bit of a dead end for me. The content creator didn’t provide any sources, just vague claims and a closing “Is this genius… or manipulation?” with googly eyes and a prompt to comment your opinion. That’s fine if you treat it as entertainment or a thought exercise and move on. But spend just a few moments in the comments and you’ll quickly notice that the video simply validated viewers’ existing perspectives, with many of them treating it as evidence of corruption.

One solid takeaway I would recommend everyone consider: every outlet has some level of bias, whether political, religious, or ideological. That doesn’t automatically make them liars or untrustworthy, but it does mean their content is, or likely will be, influenced by it. Bottom line: don’t look for just the perfect… or… your… truth.