I believe we can all agree that the concept of misinformation isn’t new. Humans have, at least since the written and spoken word, engaged in some form of misinformation. Heck, I’ve been around long enough to remember when bad info was primarily spread by word of mouth, print, and TV, not by today’s social media, system algorithms, and infinite doom scrolling in your favorite feed. The difference today and likely in the future, as technologies adapt and improve, is in the speed, access, and our ability to interact with this influx of “information”. You don’t just read or hear something these days; you can see it, react in an instant, and share it before you’ve even considered taking a moment to think the content through.

So in this module, we explore three games and credibility tools that offer some insight into the motivations and means by which a person or entity could manipulate information and provoke a reaction or action. I looked at two of them: RumorGuard and the Bad News game.

Bad News – The Way the Game Was Meant to be Played

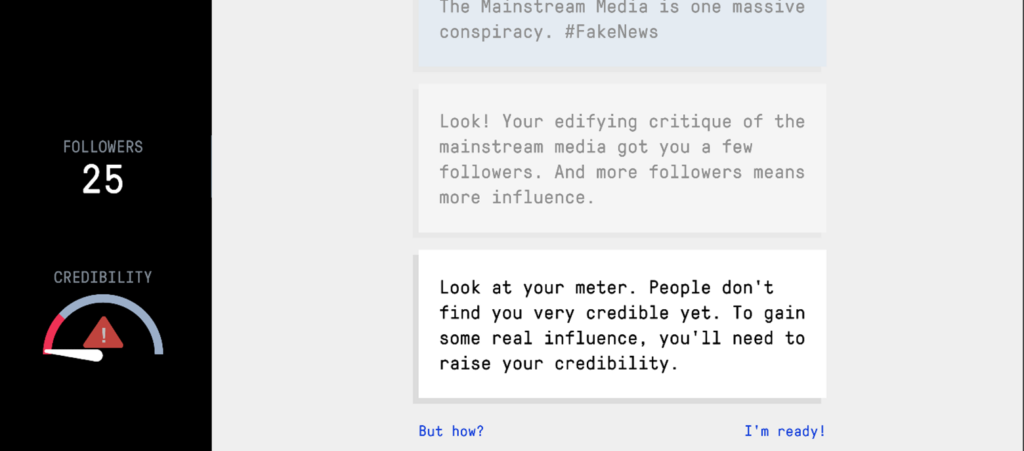

Bad News takes a different approach to exposing you to the machinery behind how information is developed and disseminated. You play as the bad actor. You’re building a fake news brand, gaining followers, and trying to maintain just enough credibility to keep people hooked.

For me, this was an interesting little game. As you start playing and earning followers, you start focusing on content that you’ve seen before, either figuring out how to spin it for more traction or questioning whether you believe any of it at all. It kind of forces you into this gray area: meet the intent of the game or be a better person and refrain. The game subtly pushes you to make decisions just to keep progressing, and before you know it, you’re playing along. You start to notice the tactics pretty quickly:

- Push outrage or keep it subtle?

- Go full conspiracy or stay believable?

- How far can I go before people stop trusting me?

And that’s the point: you quickly start to realize misinformation isn’t always about outright lies and crazy conspiracies. It’s the art of framing the content, leveraging a desired emotional response, and timing it to maximize both reach and awareness in order to build credibility.

In some research, I found an article (from Module 1) where Joan Donovan describes the lifecycle of media manipulation: planning, seeding, responses, changes to the information ecosystem, and finally, adjustment. This game walks you through those stages, not directly but indirectly, as you earn 7 badges or tools. You’re basically running your own mini-influence operation from behind the desk.

I also found a YouTube video where Kate Starbird suggests that misinformation spreads because of emotional appeal. We, the viewers, find a connection with the content, and the fastest and most effective connection appears to be the emotional one. It’s difficult to discredit emotion; you can challenge the facts surrounding an emotional response, but not entirely the response itself. This game leans heavily into that by leveraging anger and outrage. You can build engagement quickly and likely build solid loyalty from those who engage with the content. The developers describe these games as a form of “psychological vaccine”: the theory is that by using these tactics yourself in the game, you can start to recognize manipulation in the content you review.

Is it perfect? No, but nothing really is, and in truth, I’m even skeptical of the game itself. I felt manipulated, and the game felt very linear, guiding me down a specific path with only the perception of choice. That said, I am confident from my limited research that most misinformation involves some form of bots, algorithms, and bigger networks than this game can really demonstrate. But from a pure learning standpoint, I think it gives you some basic awareness of how bad actors could be using the techniques and tools it demonstrates to manipulate information in an unrestricted digital ecosystem.

RumorGuard – Wait, that’s not true?

RumorGuard is a “fact-checking” tool. It takes real viral posts, headlines, images, and social media claims, and breaks them down using things like verified evidence, context, and source credibility. RumorGuard offers a reasonable and plausible explanation of what’s wrong (or right) with the information and gives the viewer the sources to validate on their own terms.

Using it feels a lot like sitting through a solid brief. It’s straightforward, informative, and built on a logic matrix. What stood out to me is how much this lines up with research from Gordon Pennycook (read the abstract – it’s the only part that is free… lol). His abstract states, “Together with additional computational analyses, these findings indicate that people often share misinformation because their attention is focused on factors other than accuracy—and therefore they fail to implement a strongly held preference for accurate sharing.”

In my reading of the statement, people often share misinformation not because they believe it, but because they’re not paying attention to what they are consuming. RumorGuard offers a means to take a moment and “pay attention”, that is, if you choose to do so.

So why don’t more people use tools like RumorGuard? Simple: it’s passive. You must want to question, step out of your comfort zone or bubble, and ask whether what you’re reading, watching, or feeling is meaningful and honest. Why should knowing the truth be more important than my ideology and moral concerns? How do I use this new awareness?

My Final Thoughts – Questioning my questions

Both the Bad News game and RumorGuard attempt to build a sense of awareness. What really caught me off guard was how easy it was to use RumorGuard and other tools like it. I generally pull my news from several sources. I use the Ground News app, and I review information with an understanding of each source’s motivations. If we genuinely want to learn and be better informed, tools like RumorGuard are a start. If we take the moment to see each other and not the content, we might all find common ground on the important matters of our coexistence.
