If you’re a Seabee, or any military service member for that matter, this isn’t just another OPSEC blog post. It’s about protecting yourself, your family, your shipmates, and the mission in a space most of us scroll through every day but rarely stop to think about: our digital feed.

The reality is simple, shipmates: most online platforms are not designed to prioritize truth. Instead, they reward speed, emotion, repetition, and engagement. This means the content you see most often on X, TikTok, Instagram, or whatever social media platform you use isn’t chosen for accuracy; it’s chosen to get your engagement and reaction. Remember, not everyone online is your friend! And in a community like ours, which is built on trust, rapid communication, and shared information, that can create real risk. What makes us effective in the field, underway, or at home can make us all vulnerable online.

This post breaks things down in plain, no-nonsense terms. It focuses on three key forces shaping what you see: algorithms that push emotionally charged content, bot networks that create the illusion of popularity, and AI-generated media that can look real enough to fool anyone at a quick glance. The goal here isn’t to scare you or bash technology. It’s to give you a simple, practical framework so you can slow down, verify, and make informed decisions before you like, share, or act. Because in our line of work, bad information isn’t just annoying… like being 30 minutes early for muster… it has a real impact on trust, on readiness, and on each of us.

Hey Seabee!

These 3 Things Could Be Shaping Your Feed

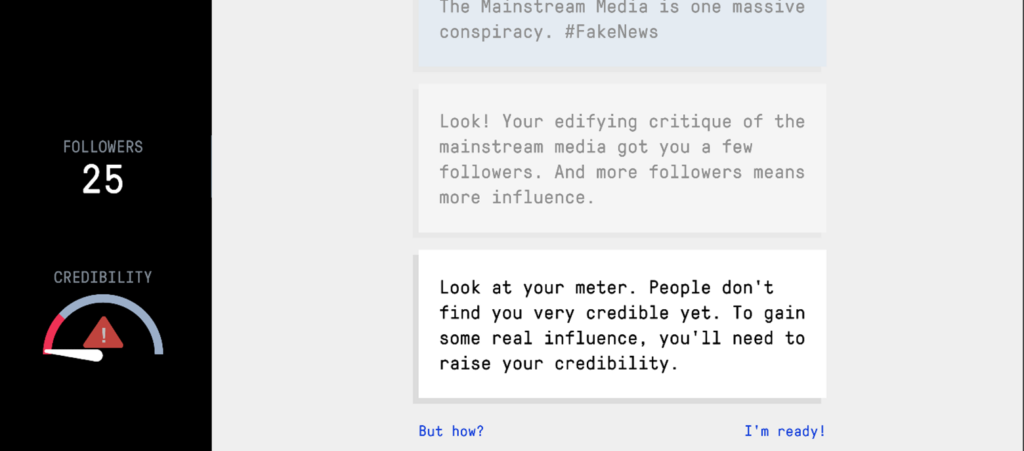

1. Algorithms

Algorithms push what gets reactions.

Not what’s true.

So if something:

- makes you mad

- scares you

- hits your gut

…it’s more likely to show up again and again.

That’s how false info starts to feel real.
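To make that concrete, here is a toy sketch in Python of a feed ranked purely by engagement. It is not any real platform’s algorithm: the `Post` fields, the weights, and the `rank_feed` function are all made up for illustration. The thing to notice is that the score never looks at whether a post is accurate.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    likes: int
    shares: int
    comments: int
    is_accurate: bool  # known to us for the demo; the ranking never reads it


def engagement_score(post: Post) -> int:
    # Hypothetical weights: shares and comments count for more than likes.
    return post.likes + 3 * post.shares + 2 * post.comments


def rank_feed(posts: list[Post]) -> list[Post]:
    # Highest engagement first; accuracy plays no part in the ordering.
    return sorted(posts, key=engagement_score, reverse=True)


posts = [
    Post("Base gym hours updated", likes=40, shares=2, comments=5, is_accurate=True),
    Post("BREAKING: outrage rumor", likes=300, shares=150, comments=90, is_accurate=False),
]

feed = rank_feed(posts)
for post in feed:
    print(engagement_score(post), post.text)
```

In this made-up example the inaccurate rumor lands at the top of the feed simply because more people reacted to it.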

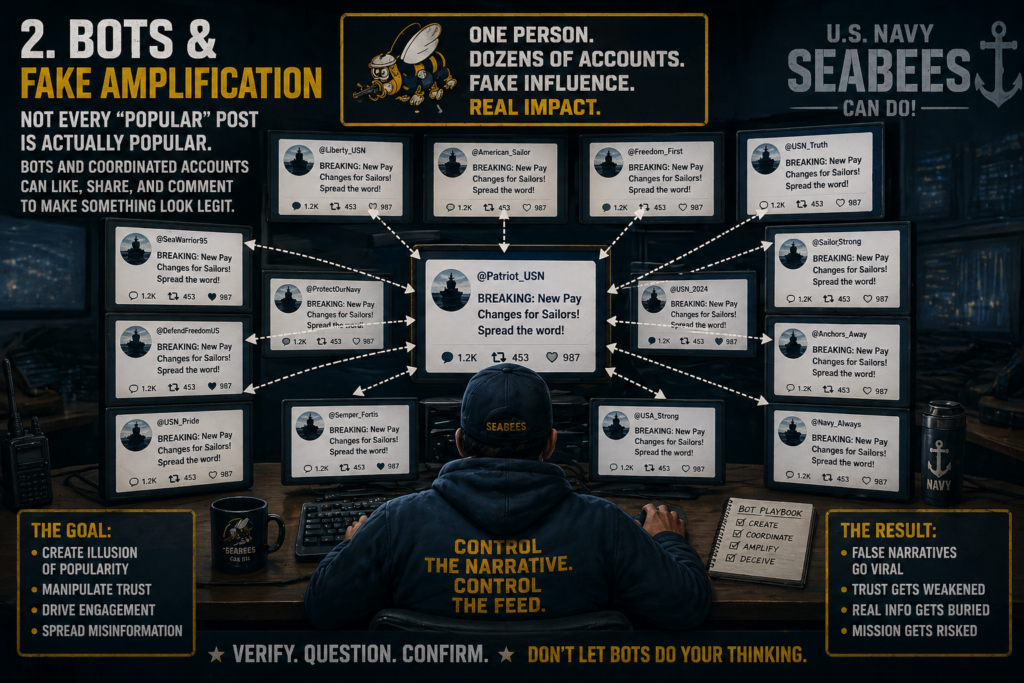

2. Bots & Fake Amplification

Not every “popular” post is actually popular.

Bots and coordinated accounts can:

- Like

- Share

- Comment

…to make something look legit.

Repetition and perceived popularity are key drivers of misinformation going viral.

Translation:

If it looks like everyone’s talking about it… you’re more likely to believe it.

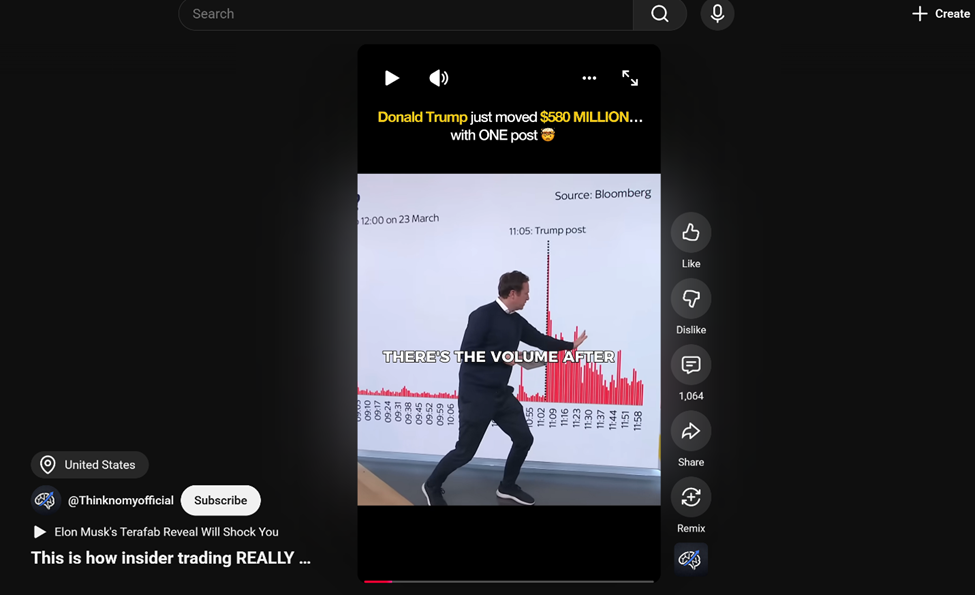

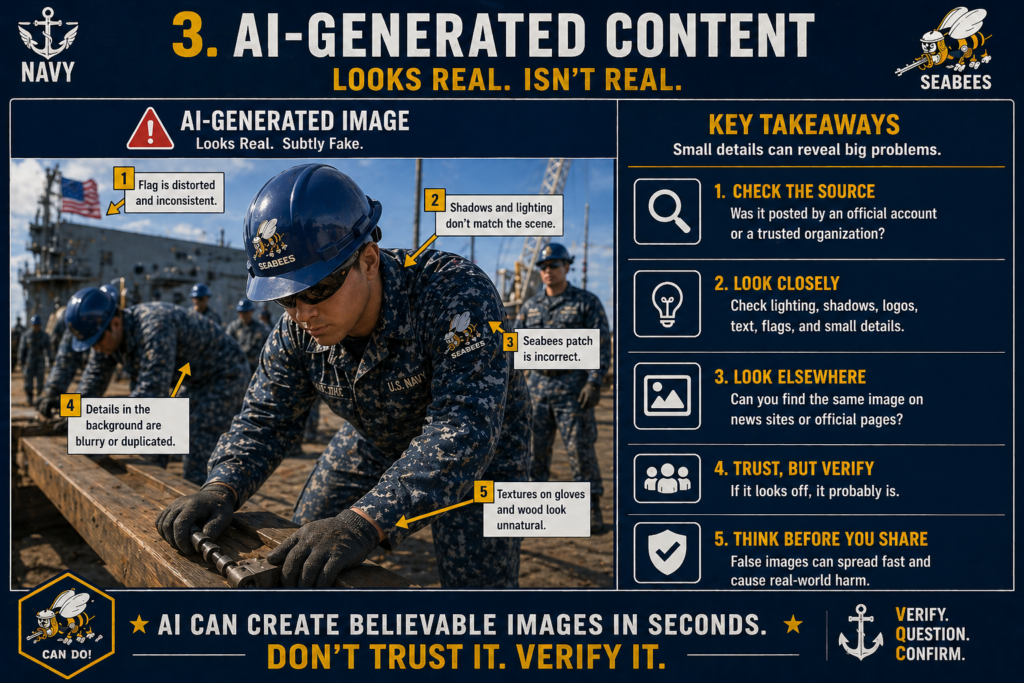

3. AI-Generated Content

This is the new frontier.

AI can now create:

- Fake military images

- Fake videos

- Fake statements

- Fake “news clips”

And they look real enough at a glance.

Media manipulation isn’t just editing photos and video anymore… it’s full-on creation.

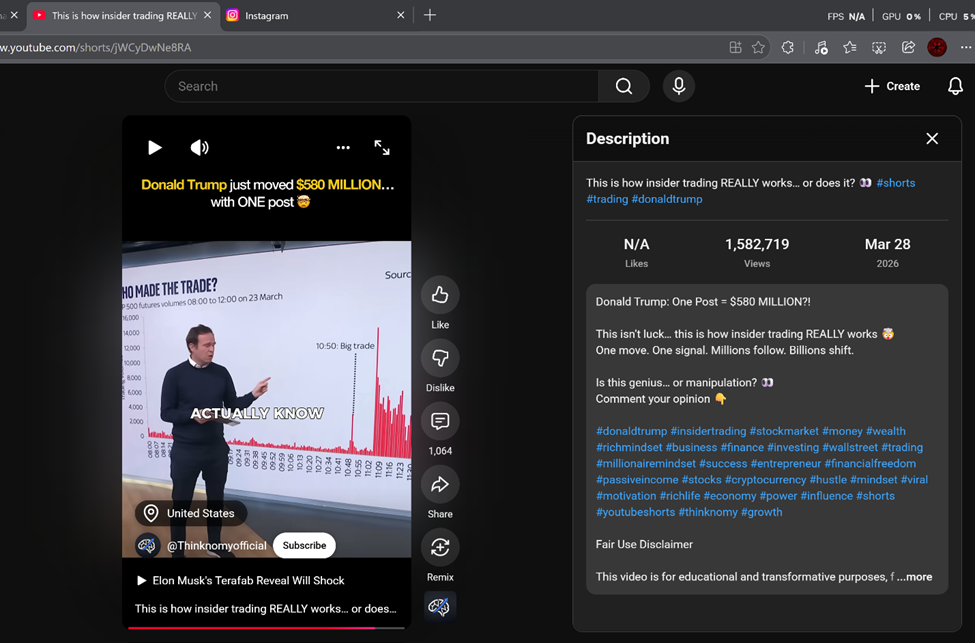

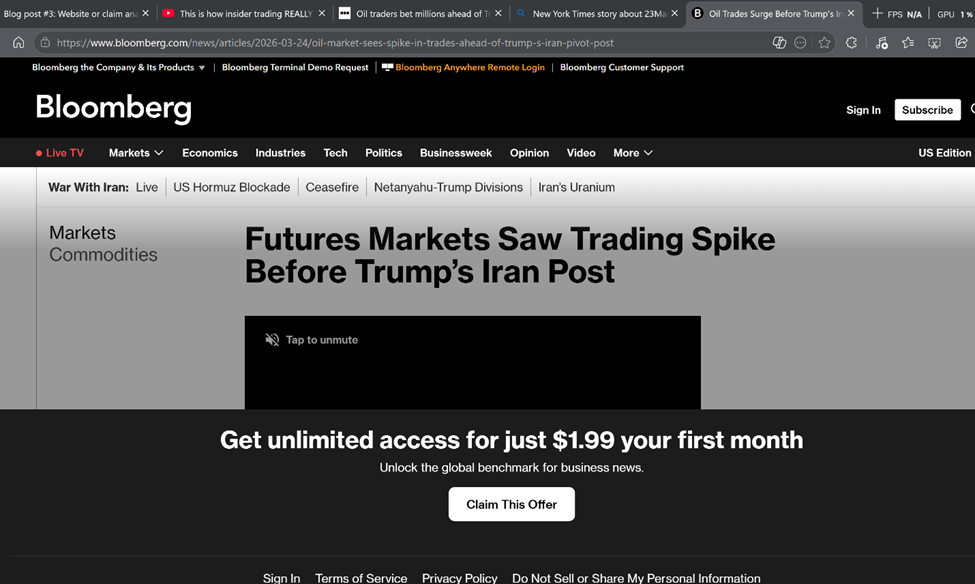

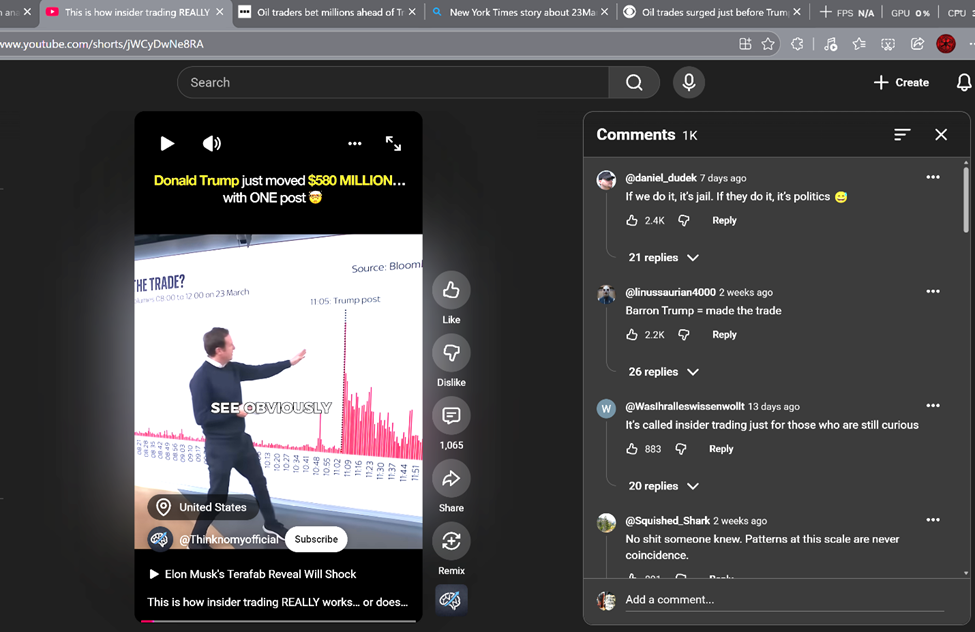

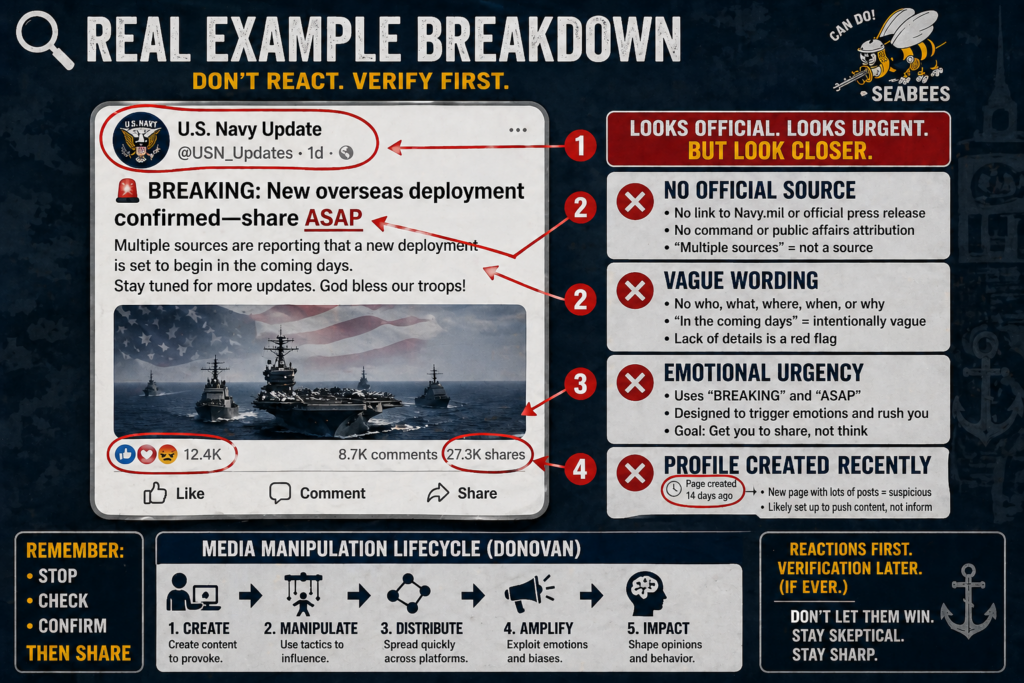

Real Example Breakdown

Let’s say you see this:

“BREAKING: New overseas deployment confirmed—share ASAP”

Looks official, right? It has a military-looking profile, tons of shares, and imagery of ships underway.

But look closer:

- ❌ No official source

- ❌ Vague wording

- ❌ Emotional urgency (“share ASAP”)

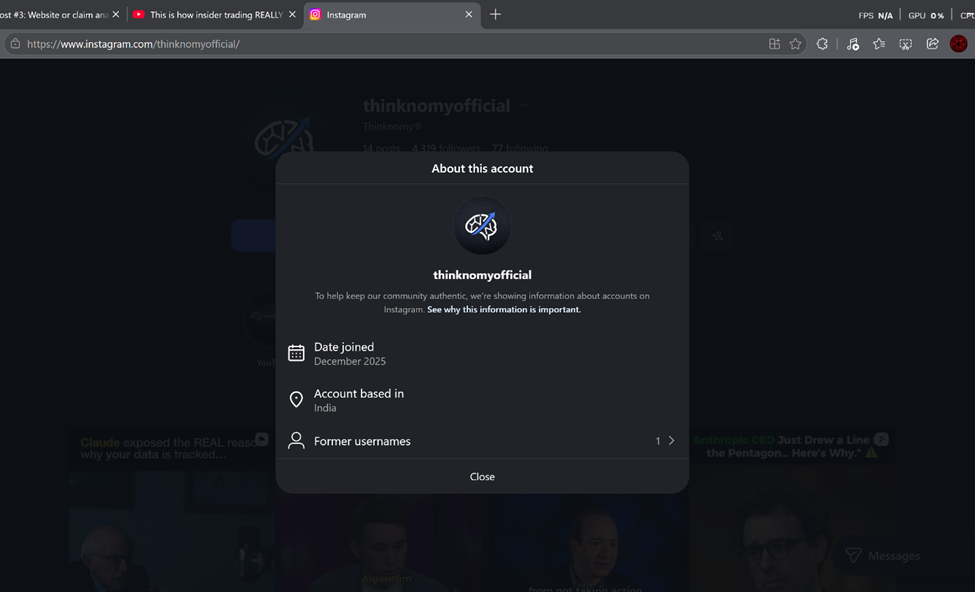

- ❌ Profile created recently

That’s straight out of the media manipulation playbook: content designed to trigger a reaction first and verification later (if ever).
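If it helps to see the checklist as logic, here is a rough Python sketch of the same red-flag check. Everything in it is an assumption made for illustration: the `red_flags` function, the phrase list, and the 30-day cutoff for a “recent” profile are invented, not an official tool or standard. Vague wording is left out because that one takes human judgment.

```python
# Illustrative phrases only; real posts will use many others.
URGENCY_PHRASES = ("share asap", "share now", "breaking")


def red_flags(text, cites_official_source, account_age_days, new_account_days=30):
    """Return the red flags found in a post, based on what you can observe."""
    flags = []
    if not cites_official_source:
        flags.append("no official source")
    lowered = text.lower()
    if any(phrase in lowered for phrase in URGENCY_PHRASES):
        flags.append("emotional urgency")
    if account_age_days < new_account_days:
        flags.append("profile created recently")
    return flags


flags = red_flags(
    "BREAKING: New overseas deployment confirmed, share ASAP",
    cites_official_source=False,
    account_age_days=5,
)
print(flags)
```

Any flag is a reason to slow down; a post that raises all three, like this one, should not be liked, shared, or answered.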

Our adversaries use this tactic to validate personnel and asset movements, including troop deployments! Every reply, correction, or share from someone in the know helps them confirm the details.

Don’t fall for that ole trick. Loose lips sink ships!

Remember OPSEC!

What Can You Do About It? (Your Simple Framework)

Slow down. Verify. Stay sharp. Report.

That’s it.

You don’t need to be a cyber expert—you just need to slow down and validate.

If it doesn’t seem right, report it to your Security Officer ASAP!

Bottom Line Up Front

Your feed is not neutral.

It’s shaped by:

- Algorithms chasing engagement

- Bots faking popularity

- AI creating believable content

- Adversaries looking for intel

- Your interests and the content you engage with

None of that is going away.

So the responsibility for OPSEC shifts to you.

Don’t panic. You don’t have to distrust everything.

Just:

- Slow down

- Verify

- Stay sharp

- Report

🔚 Final Thoughts

In uniform, we are trained to question, verify, and act with intent.

That shouldn’t stop when we pick up our phones, post, or share.

Stay skeptical, stay informed… and don’t let the feed do your thinking for you.

Want to know more? Try these resources:

- Pennycook, Gordon et al. – Why people share misinformation (attention vs accuracy)

- Starbird, Kate et al. – Misinformation is more than just bad facts: How and why people spread rumors is key to understanding how false information travels and takes root

- Donovan, Joan – The Lifecycle of Media Manipulation

- Federal Trade Commission – Imposter scams targeting veterans and servicemembers

- U.S. Department of Defense – These Social Media Scams Affect the Military

- RAND – Fighting Disinformation Online (Tools)